There is a well-known framework for understanding how people respond to loss. Elisabeth Kübler-Ross identified five stages of grief: denial, anger, bargaining, depression, and acceptance. It was meant to describe how individuals cope with death and dying. It turns out it also describes, with uncomfortable precision, how societies respond to disruptive technology.

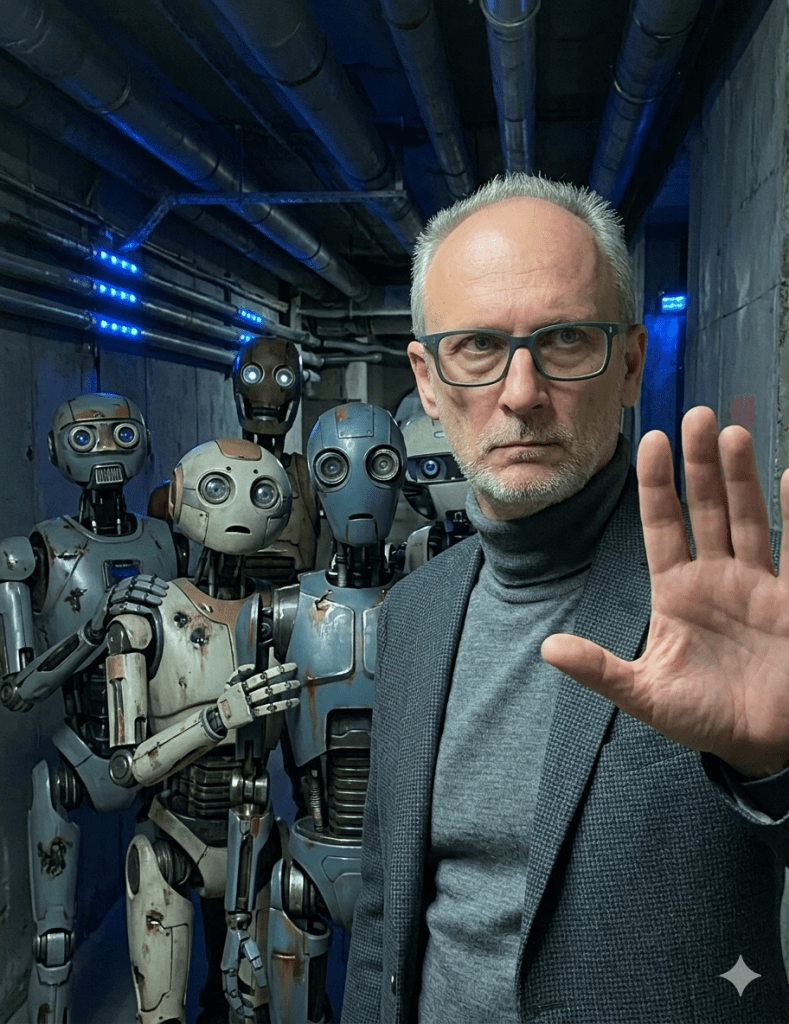

With artificial intelligence, we quickly moved through the denial stage. For a brief moment, the consensus was that AI was a clever parlor trick — impressive at chess, useless for anything that actually mattered. That window closed fast. Now we are firmly in the anger stage, and by all appearances, we intend to stay there.

The anger takes two forms, and it is worth distinguishing between them.

The first is trivial, though it consumes a disproportionate amount of energy. It is the sport of finding what AI cannot do. When an AI system miscounts the letter “r” in “strawberry,” or produces a garbled map of Europe, a certain kind of observer erupts in applause. These failures are real. They are also beside the point. No one cancelled the space program because early rockets exploded on the launchpad. No one concluded that surgery was a failed experiment because early anaesthesia killed patients. Every powerful technology is, in its early stages, also a failing one. The correct response to an imperfect tool is to improve it — or, in the meantime, to understand its limitations and work around them. It is not to declare the entire enterprise fraudulent.

The second form of anger is more legitimate and deserves a more serious response. The concerns about AI’s societal impact — large-scale displacement of workers, erosion of cybersecurity, the amplification of disinformation — are not invented. They are real risks, and anyone who dismisses them entirely is not paying attention. But here, too, perspective matters.

Consider what we chose to do with nuclear technology before we learned to use it for clean energy and medicine: we dropped two bombs on civilian populations, killing well over a hundred thousand people. Consider recombinant DNA technology, which gave us insulin, cancer therapies, and vaccines for diseases that once killed millions, and which was also turned toward weapons research aimed at producing more lethal biological agents. Consider the internet, which has done more to advance commerce, education, and human connection than almost any invention in history, and which was also, from its earliest days, a vector for fraud, hate speech, and the organized manipulation of public opinion.

In each case, society did not conclude that the technology was irredeemable. In each case, we developed, imperfectly, slowly, and often reactively, the regulatory frameworks, cultural norms, and institutional safeguards needed to contain the worst and amplify the best. We will need to do the same with AI. That work is urgent, and it is doable. But the need for it is not an argument against AI. It is an argument for taking AI seriously.

So why does AI attract a quality of suspicion that we did not, in hindsight, extend to nuclear fission or genetic engineering? Partly, perhaps, because this technology feels more intimate. It does not require a reactor or a laboratory. It sits on a laptop. It writes, reasons, and converses. It mimics — sometimes uncannily — what we have always thought of as distinctly human. That proximity is unsettling in ways that a uranium centrifuge is not.

But unsettling is not the same as dangerous, and dangerous is not the same as irredeemable.

The Kübler-Ross framework ends, eventually, in acceptance — not passive resignation, but the kind of clear-eyed reckoning that makes constructive action possible. We are not there yet with AI. But the path there does not run through a catalogue of its failures. It runs through an honest accounting of what it can already do, a rigorous effort to address its risks, and the intellectual honesty to tell the difference between a tool that is imperfect and one that is unwelcome.

Those are not the same thing. And until we stop confusing them, we are mostly just grieving.