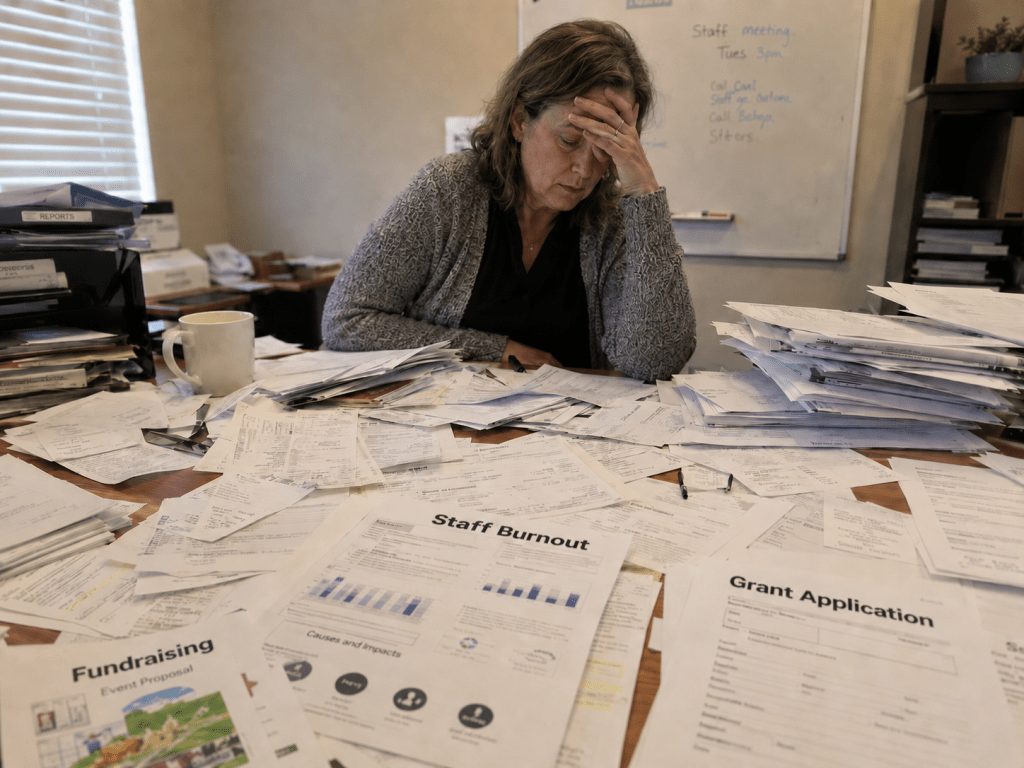

Earlier this spring, I wrote about the structural pressures bearing down on the nonprofit sector. The numbers are not comfortable. According to the State of Nonprofits 2026 report, a national survey of 380 nonprofit leaders published by the Center for Effective Philanthropy, nearly three-quarters of nonprofit CEOs report that demand for their services has increased since January 2025. Almost 60% say it has become harder to secure foundation grants. More than a third have seen reduced government funding. About 30% have cut staff.

These are not outlier findings. They describe a sector caught in a widening gap between what communities need and what organizations can provide. And the tools available to close that gap are becoming less reliable.

One of those tools is the foundation grant. And it is precisely here that I want to slow down, because I think the difficulty many nonprofits are experiencing with grant funding is not quite what it appears to be.

A Fictional Case — and a Real One

What follows is a diagnostic exercise built around a fictional organization: Harbor Bridge Family Services, a human-service nonprofit with an 18-person staff, an annual budget of approximately $2.8 million, and a 25-year record of serving low-income families, immigrants, and older adults in its community.

Harbor Bridge is not real. But the situation it represents is.

I constructed the case to reflect conditions that appear with increasing regularity in the sector: rising service demand, a development function under strain, a grant success rate in visible decline, and leadership already working beyond capacity. The State of Nonprofits 2026 data suggest that the narrative I created is not a composite of edge cases. It is damn close to reality.

My purpose in building the case was not to demonstrate that I can describe nonprofit problems accurately. It was to test whether a structured diagnosis process — the kind of rigorous, assumption-interrogating problem definition I have been writing about recently — could produce genuine insight when applied to a sector-specific situation. Not whether the process could just name the problem, but whether it could reframe it.

It could. And the reframe matters.

The Pattern Harbor Bridge Tried to Escape

Harbor Bridge’s data tells us that it has a problem with the grant application process:

| Year | Applications | Awards | Total Requested | Total Awarded |
| --- | --- | --- | --- | --- |
| 2022 | 14 | 8 | $920,000 | $610,000 |
| 2023 | 16 | 7 | $1,100,000 | $575,000 |
| 2024 | 19 | 5 | $1,350,000 | $420,000 |
| H1 2025 | 11 | 2 | $760,000 | $145,000 |

More applications each year. Fewer awards. Smaller amounts. Harbor Bridge was, as its staff described it, running harder and falling further behind.
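The trend becomes sharper when each period is reduced to two ratios: the share of applications that were funded, and the share of requested dollars that were awarded. What follows is a minimal Python sketch that uses only the figures in the table; the names `GRANT_HISTORY`, `summarize`, and `dollar_yield` are illustrative and not part of the case.

```python
# Figures copied from the table above:
# (period, applications, awards, total requested, total awarded)
GRANT_HISTORY = [
    ("2022", 14, 8, 920_000, 610_000),
    ("2023", 16, 7, 1_100_000, 575_000),
    ("2024", 19, 5, 1_350_000, 420_000),
    ("H1 2025", 11, 2, 760_000, 145_000),
]


def summarize(history):
    """Return the win rate and the share of requested dollars awarded per period."""
    rows = []
    for period, applications, awards, requested, awarded in history:
        rows.append({
            "period": period,
            "win_rate": awards / applications,     # awards per application
            "dollar_yield": awarded / requested,   # dollars awarded per dollar requested
        })
    return rows


if __name__ == "__main__":
    for row in summarize(GRANT_HISTORY):
        print(f"{row['period']:>8}: win rate {row['win_rate']:.0%}, "
              f"awarded/requested {row['dollar_yield']:.0%}")
```

On these figures, the win rate falls from roughly 57% in 2022 to about 18% in the first half of 2025, and the share of requested dollars awarded falls from about 66% to about 19%.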

The organization had not been passive in response. It had tried five distinct interventions: submitted more applications, brought in an outside grant writer, added more client stories to proposals, built an internal impact dashboard, and launched a monthly donor campaign. Each response was reasonable. None of them helped much.

That pattern — multiple intelligent attempts, no sustained improvement — is one of the clearest diagnostic signals suggesting that the stated problem may not be the real one. The organization has been solving something accurately described but not accurately defined.

The Trap Inside the Data

The structured diagnosis, conducted using RCFinder — an AI-assisted tool that establishes a neutral problem statement before any analysis begins, then generates and deepens a structured hypothesis set — identified two primary root causes. They were not independent. They formed a loop.

Funding pressure and rising service demand were pushing Harbor Bridge to submit more applications. More applications consumed the time and relational bandwidth needed to qualify funders carefully, cultivate relationships, and position the organization as a genuine mission partner rather than a grant applicant. That reduced capacity produced lower-fit applications. Lower-fit applications produced lower win rates and smaller, more restricted awards. Smaller awards intensified the funding pressure, which pushed the organization to submit still more applications.

Every response Harbor Bridge had tried was operating entirely within this loop. Bringing in a grant writer improved proposal quality but did not address funder fit or relationship depth. Submitting more applications increased volume — and made the capacity problem worse. The loop was self-reinforcing, and every attempt to escape it through the obvious responses was, in effect, tightening it.

The core finding: Harbor Bridge did not have a grant-writing problem. It had a funding strategy problem — one that had been progressively hidden behind a grant-writing problem for three years.

Why This Matters Beyond Harbor Bridge

I want to be precise about what this diagnostic finding does and does not claim.

It does not claim that the external environment is irrelevant. The State of Nonprofits 2026 data is real, and the tightening of foundation grant markets is a genuine structural shift. Harbor Bridge was not imagining this difficulty.

What the diagnosis reveals, however, is that the organization’s response to external pressure can create a secondary problem that compounds the original one, and that the secondary problem, once established, can become the dominant driver of poor outcomes. Harbor Bridge’s declining win rate was not caused only by a tighter grant market. It was being actively worsened by a volume-driven response that was consuming the relational capacity on which grant success depends.

This distinction matters because it changes what the right response looks like.

If the problem is purely external — funder priorities have shifted, the market is harder — then the appropriate response is largely strategic repositioning: find different funders, develop alternative revenue streams, adjust program offerings. These are real and necessary conversations.

But if the problem is partly internal — a self-reinforcing loop that is destroying the relational infrastructure that grants require — then the most urgent response is to stop the loop before anything else.

And stopping the loop requires first seeing it clearly.

What Diagnosis Made Possible

Once the loop was visible, the path forward became considerably more tractable. A second tool, RCSolver, generated a set of recommendations organized by implementation readiness.

The immediate priority was not to write better grants. It was to stop the capacity drain. That meant implementing a Go/No-Go qualification gate before any new application was begun — a simple filter based on funder fit, relationship status, and strategic alignment. It meant protecting existing funder relationships above all else, because renewals are won on relationship depth, not proposal quality. And it meant building a systematic process for learning from recent declines, to understand what differentiated funded applications from unfunded ones.
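To make the qualification gate concrete, here is one way such a filter might look in Python. This is a sketch under stated assumptions, not the gate RCSolver produced: the three criteria come from the paragraph above, but the rule that all three must pass, the `Opportunity` fields, and the example funders are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Opportunity:
    """A prospective application. Field names are hypothetical."""
    funder: str
    funder_fit: bool           # funder's stated priorities match the program
    warm_relationship: bool    # an existing contact or prior conversation exists
    strategic_alignment: bool  # the grant advances the organization's own strategy


def go_no_go(opp: Opportunity) -> tuple[bool, list[str]]:
    """Return (decision, failed criteria).

    Assumed rule: an application proceeds only if all three criteria pass.
    """
    checks = {
        "funder fit": opp.funder_fit,
        "relationship status": opp.warm_relationship,
        "strategic alignment": opp.strategic_alignment,
    }
    failed = [name for name, passed in checks.items() if not passed]
    return (not failed, failed)


if __name__ == "__main__":
    # Invented funders, for illustration only.
    pipeline = [
        Opportunity("Example Foundation A", True, True, True),
        Opportunity("Example Foundation B", True, False, True),
    ]
    for opp in pipeline:
        decision, failed = go_no_go(opp)
        label = "GO" if decision else "NO-GO (" + ", ".join(failed) + ")"
        print(f"{opp.funder}: {label}")
```

Returning the failed criteria alongside the decision keeps a record of why an opportunity was declined, which can feed the process of learning from declines described above.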

Over the following weeks and months, the work would shift toward restructuring the pipeline: prioritizing renewals and warm relationships over cold outreach, repositioning the organization as a funder partner rather than a grant applicant, and gradually rebuilding the relational infrastructure that had been consumed by volume-driven grant-seeking.

None of these recommendations required Harbor Bridge to become a fundamentally different organization. They required it to stop doing one thing — treating grant volume as a response to grant pressure — and start doing something else: treating funder relationships as the asset that makes grants winnable in the first place.

The Diagnostic Question Worth Asking

Earlier in this series, I wrote about the double bind facing resource-constrained nonprofits: the organizations that can least afford to solve the wrong problem are also the least equipped to invest in defining the right one. The Harbor Bridge case is, among other things, an illustration of what that bind looks like from the inside.

Harbor Bridge’s leadership knew something was wrong. They had tried to fix it, repeatedly, with reasonable tools. What they lacked was not effort, intelligence, or commitment, but a structured way to step outside the problem and ask the question that the pressure of the situation made nearly impossible to ask: Are we solving the right problem?

That question — asked before more resources are committed to the existing approach — is worth more than any number of improved proposals.

If your grant success rate is declining despite increased effort, the instinct to work harder is understandable. But before you hire the grant writer, submit more applications, or redesign your impact metrics, it is worth pausing long enough to ask: is this a grant-writing problem? Or is it something else, wearing the face of a grant-writing problem?

The answer will determine whether the next round of effort moves you forward — or tightens the loop.